AI Voice Agent Compliance: TCPA Legal Guide for Voice AI Builders

Quick Answer

What is AI voice agent compliance? AI voice agent compliance is the legal framework governing AI-generated voice technology under the TCPA, FCC rules, and state laws. The FCC's February 2024 declaratory ruling confirmed that AI voices trigger TCPA consent requirements, with violations carrying $500–$1,500 per call and no cap. Voice AI builders must capture appropriate consent and, on telemarketing calls, provide an automated opt-out within two seconds of the opening identification. They should also disclose the AI-generated voice at the start of the call (a proposed FCC requirement worth adopting now) and track state AI laws such as Colorado's proposed ADMT framework.

Key Takeaways

The FCC's February 2024 ruling confirmed AI-generated voice is an "artificial voice" under TCPA—consent is mandatory

Outbound AI voice calls require prior express consent (informational) or prior express written consent (marketing)

The September 2024 FCC NPRM proposes mandatory AI disclosure at the start of every AI-generated call

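State laws may impose significant obligations on voice AI used in consequential decisions. Colorado's proposed ADMT framework would replace the original Colorado AI Act, whose "high-risk" regime takes effect June 30, 2026 if the replacement fails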

TCPA violations carry $500-$1,500 per call with no aggregate cap. At the $1,500 rate for willful violations, a 10,000-call campaign means $15M in potential exposure
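The exposure arithmetic is simple enough to sketch. This is a minimal illustration, not a damages model. It assumes one violation per call and ignores actual losses, defenses, and calls that may carry more than one violation. The function and constant names are hypothetical.

```python
# Statutory damages under 47 U.S.C. § 227(b)(3): the greater of actual loss
# or $500 per violation, which a court may increase to up to $1,500 if the
# violation was willful or knowing. This sketch ignores actual losses and
# assumes exactly one violation per call.
BASE_PER_CALL = 500
TREBLED_PER_CALL = 1_500


def tcpa_exposure(violating_calls: int, willful: bool = False) -> int:
    """Return worst-case statutory damages; the TCPA sets no aggregate cap."""
    if violating_calls < 0:
        raise ValueError("call count cannot be negative")
    per_call = TREBLED_PER_CALL if willful else BASE_PER_CALL
    return violating_calls * per_call


print(f"${tcpa_exposure(10_000):,}")                # $5,000,000
print(f"${tcpa_exposure(10_000, willful=True):,}")  # $15,000,000
```

Because there is no cap, exposure scales linearly with call volume. Every additional non-compliant call adds the same amount.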

AI voice technology is advancing faster than the regulations designed to govern it. If you're building, deploying, or investing in conversational AI, voice agents, or AI-powered telephony, you're operating in a regulatory environment that's simultaneously uncertain and high-stakes.

Unlike generic AI compliance guides, this resource addresses the specific challenges voice AI builders face: navigating FCC rules that predate modern AI, preparing for state laws like the Colorado AI Act, understanding when inbound vs. outbound matters, and building compliance into your product architecture—not bolting it on after launch.

Why AI Voice Agents Face Elevated Regulatory Risk

AI voice agents sit at the intersection of multiple regulatory frameworks, creating a uniquely complex compliance landscape:

Legacy laws, new technology: The TCPA was written in 1991 to address "robocalls." The FCC is now applying these rules to AI-generated voices that can hold natural conversations—a technology Congress never anticipated.

The "artificial voice" trigger: Any AI-generated voice automatically triggers TCPA's consent requirements, regardless of how human-like it sounds or whether you're using an autodialer.

State law fragmentation: While federal TCPA provides the baseline, states like Colorado, California, and Illinois are layering additional AI-specific requirements on top.

Platform vs. deployer liability: If you're a voice AI platform, your customers' compliance failures may become your liability. If you're deploying someone else's AI, you can't assume the platform has solved compliance for you.

Enterprise deal-breaker: Enterprise buyers increasingly require compliance documentation in RFPs. Compliance gaps don't just create legal risk—they kill deals.

The FCC's Current Framework for AI Voice

February 2024 Declaratory Ruling

In February 2024, the FCC issued a declaratory ruling that removed any ambiguity about AI voice and the TCPA. The ruling confirmed that:

AI-generated voices constitute "artificial or prerecorded voice" under the TCPA

This classification applies regardless of whether the AI generates speech in real-time or uses pre-recorded elements

All existing TCPA consent requirements apply to AI voice calls

State attorneys general can enforce TCPA violations involving AI voice

Critical implication: Even if your dialer isn't technically an "automatic telephone dialing system" (ATDS), you still need consent because the AI-generated voice itself triggers consent requirements. This catches many voice AI builders off guard.

September 2024 NPRM: What's Coming

The FCC's September 2024 Notice of Proposed Rulemaking signals additional requirements on the horizon:

Proposed AI-Generated Call Definition: "A call that uses any technology or tool to generate an artificial or prerecorded voice or text using computational technology or other machine learning, including predictive algorithms and large language models, to process natural language and produce voice or text communication."

Proposed Disclosure Requirements:

Consent disclosure: When obtaining consent, callers must disclose that calls may include AI-generated content

In-call disclosure: AI voice calls must clearly identify themselves as AI-generated at the start of the call

Text message disclosure: AI-generated text messages must include disclosure of AI involvement

Timeline Note: The NPRM comment period closed in late 2024. A final rule could come in 2026, though the current administration's regulatory priorities may delay finalization. Smart builders are implementing these disclosures now—it's where the law is heading, and it builds consumer trust.

Consent Requirements for AI Voice Calls

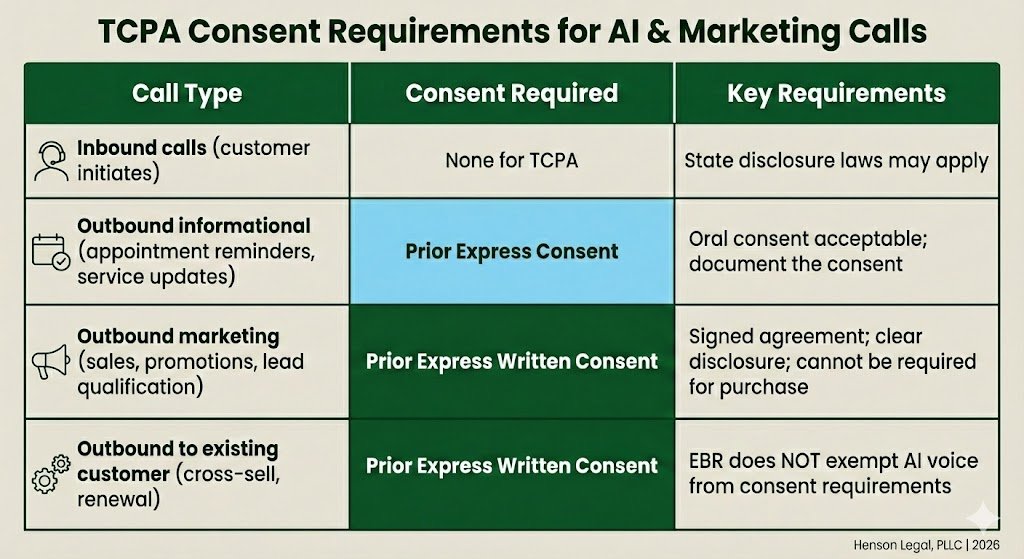

The TCPA creates a tiered consent framework. For AI voice calls, consent is always required—the only question is which level.

The AI Voice Consent Matrix

Critical distinction: An Established Business Relationship (EBR) exempts you from Do-Not-Call rules for manual calls—but it does NOT exempt AI voice calls from consent requirements. The AI voice itself triggers the consent obligation, regardless of your existing relationship with the consumer.

These consent requirements are currently being tested in several lawsuits. One example is the MortgageOne case. There, an AI voice agent placed the initial calls and then transferred consumers to the lender. The lender lacked the proper level of consent and is now facing a lawsuit. The meaning of consent itself is also being questioned. The Fifth Circuit recently held that the TCPA itself requires only "prior express consent," so the FCC's regulations requiring "prior express written consent" overreach the statute.
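The tiered framework can be encoded as a pre-dial check. The sketch below is hypothetical. It assumes every call has already been classified as either informational or marketing, which is a legal judgment the code cannot make. It also follows the FCC's rules as written, not the Fifth Circuit's narrower reading. The `has_ebr` parameter exists only to make explicit that an established business relationship does not change the answer.

```python
from enum import Enum
from typing import Optional


class CallPurpose(Enum):
    INFORMATIONAL = "informational"
    MARKETING = "marketing"


class ConsentLevel(Enum):
    PRIOR_EXPRESS = "prior express consent"
    PRIOR_EXPRESS_WRITTEN = "prior express written consent"


def required_consent(purpose: CallPurpose, has_ebr: bool = False) -> ConsentLevel:
    """Consent tier an outbound AI voice call needs under the FCC's rules.

    has_ebr is accepted but deliberately ignored: an established business
    relationship does not waive consent for artificial-voice calls.
    """
    if purpose is CallPurpose.MARKETING:
        return ConsentLevel.PRIOR_EXPRESS_WRITTEN
    return ConsentLevel.PRIOR_EXPRESS


def consent_sufficient(purpose: CallPurpose, on_file: Optional[ConsentLevel]) -> bool:
    """True if the consent on file meets the tier this call requires."""
    if on_file is None:
        return False  # no consent at all: never place an AI voice call
    if required_consent(purpose) is ConsentLevel.PRIOR_EXPRESS:
        return True  # written consent also satisfies the lower tier
    return on_file is ConsentLevel.PRIOR_EXPRESS_WRITTEN
```

Written consent is treated as satisfying the lower tier, so a single consent record can cover both kinds of calls.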

Required Disclosures for AI Voice Calls

Under current rules and proposed regulations, AI voice calls must include specific disclosures at defined points during the conversation. The timing requirements are precise and sequential, so building them into your call flow from the start is far easier than retrofitting them later.

At the beginning of the call, the entity responsible for initiating the call must be clearly identified, along with the individual caller if one is applicable. Under proposed rules, the opening of the call must also include a clear and unambiguous disclosure that the call uses AI-generated voice technology. This is not a buried-in-the-terms disclosure — it needs to happen up front, before any substantive conversation begins.

During or immediately following the initial message, the caller must provide the telephone number of the entity responsible for initiating the call. This gives the consumer a way to identify and reach the party behind the call, which ties directly into the broader accountability framework the FCC is building around AI voice communications.

For calls that include telemarketing or advertising, the caller must also provide an automated, interactive opt-out mechanism within two seconds of the opening identification. The mechanism must be activatable by voice command or key press and must come with brief instructions explaining how to use it. The two-second window is tight, so this mechanism needs to be built into the call script architecture rather than handled as an afterthought. If your opt-out prompt comes late or is unclear, you are exposed both under the TCPA and under any state-level AI disclosure requirements layered on top.
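One way to enforce this sequence is to lint call scripts before they go live. The sketch below makes several assumptions:

- Segment labels and duration estimates are hypothetical, and real text-to-speech timing will vary.
- The two-second window is measured from the end of the identification segment, which is how 47 CFR 64.1200(b)(3) frames it for telemarketing messages.
- The AI-disclosure check reflects the proposed rule, not a current requirement.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Segment:
    kind: str       # hypothetical labels: "identity", "ai_disclosure", "callback_number", "opt_out", "body"
    seconds: float  # estimated spoken duration of this segment


def validate_opening(segments: List[Segment], max_gap: float = 2.0) -> List[str]:
    """Return a list of problems with a scripted call opening (empty if none found)."""
    problems = []
    kinds = [s.kind for s in segments]
    first_body = kinds.index("body") if "body" in kinds else len(kinds)

    if not kinds or kinds[0] != "identity":
        problems.append("open by identifying the entity initiating the call")
    if "ai_disclosure" not in kinds[:first_body]:
        problems.append("disclose AI-generated voice before substantive conversation")
    if "callback_number" not in kinds:
        problems.append("state the responsible entity's telephone number")
    if "opt_out" not in kinds:
        problems.append("offer an automated voice/key-press opt-out")
    elif "identity" in kinds:
        # Gap between the end of the identification and the start of the opt-out prompt.
        id_end = sum(s.seconds for s in segments[: kinds.index("identity") + 1])
        opt_start = sum(s.seconds for s in segments[: kinds.index("opt_out")])
        if opt_start - id_end > max_gap:
            problems.append(
                f"opt-out begins {opt_start - id_end:.1f}s after identification (limit {max_gap:.0f}s)"
            )
    return problems


script = [
    Segment("identity", 3.0),         # "This is Acme Insurance calling..." (hypothetical)
    Segment("ai_disclosure", 1.5),    # "This call uses AI-generated voice technology."
    Segment("opt_out", 4.0),          # "To stop future calls, say 'stop' or press 9." (hypothetical)
    Segment("callback_number", 2.5),  # "You can reach us at ..."
    Segment("body", 30.0),
]
print(validate_opening(script))  # []
```

Keeping the AI disclosure short and placing the opt-out prompt immediately after it leaves room inside the two-second budget.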

State "mini-TCPA" type laws

Many states have "mini-TCPA" laws that regulate telephone solicitations to their residents. Some states also restrict the use of "automatic dialing and announcing devices" (ADADs), and depending on the state, the definition of an ADAD may encompass AI voice services.

State AI Laws: The Coming Compliance Wave

Colorado ADMT Framework (Effective Date Pending — Original Law Scheduled for June 30, 2026)

Colorado's AI regulatory landscape shifted dramatically in March 2026 when the state's AI Policy Working Group released a proposed replacement framework that scraps the original SB 24-205's "high-risk AI system" structure entirely. The new framework replaces that concept with "Covered ADMT" — automated decision-making technology — and applies when AI processes personal information to generate predictions, recommendations, rankings, or classifications that materially influence a consequential decision about an individual. The covered domains include insurance, financial and lending services, employment, housing, healthcare, education, and essential government services.

Voice AI builders operating in these verticals should pay close attention. If your AI voice agent qualifies insurance leads, routes consumers to lenders based on creditworthiness signals, pre-screens employment candidates, or otherwise influences a consequential decision in a covered domain, your technology likely falls within the framework's scope. Notably, the framework carves out general-purpose large language models. Platform operators must therefore ask whether their specific implementation of underlying LLM technology qualifies as covered ADMT, based on how it is deployed in a decision-making context.

The compliance obligations are significantly lighter than those under the original law. The onerous risk management program, formal impact assessments, and rebuttable presumption structure are all gone. In their place, deployers face three core requirements:

(1) a clear and conspicuous point-of-interaction notice when ADMT is being used for a consequential decision;

(2) a post-adverse outcome notice within 30 days that describes the decision, the role ADMT played, the data used, and instructions for requesting human review; and

(3) a minimum three-year record retention obligation covering system version identifiers, change logs, and documentation of material mitigation changes.
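The two time-based obligations reduce to date arithmetic. This sketch assumes the 30 days are calendar days and that both clocks start on the date of the adverse decision. The proposal's final text may define either point differently. The helper names are hypothetical.

```python
from datetime import date, timedelta
from typing import Dict

ADVERSE_NOTICE_WINDOW = timedelta(days=30)  # assumed calendar days from the decision
RECORD_RETENTION_YEARS = 3                  # minimum retention under the proposal


def add_years(d: date, years: int) -> date:
    """Same month/day `years` later; Feb 29 rolls forward to Mar 1 so retention is never shortened."""
    try:
        return d.replace(year=d.year + years)
    except ValueError:
        return d.replace(year=d.year + years, month=3, day=1)


def admt_deadlines(decision_date: date) -> Dict[str, date]:
    """Hypothetical deadline calculator for one adverse ADMT-influenced decision."""
    return {
        "adverse_notice_due": decision_date + ADVERSE_NOTICE_WINDOW,
        "retain_records_until": add_years(decision_date, RECORD_RETENTION_YEARS),
    }


print(admt_deadlines(date(2026, 7, 1)))
```

Rolling leap-day dates forward rather than back errs on the side of keeping records slightly longer than required.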

The liability model is where voice AI platform operators need to focus. The framework allocates fault between developers and deployers based on relative responsibility under a several liability model. If a deployer uses your technology as intended and contracted for, you share exposure. If they go off-script, the framework may protect you. Critically, any indemnification clause that attempts to shield a party against its own discriminatory use of ADMT is void as against public policy — a provision that directly affects how platform agreements and marketplace terms should be structured.

Enforcement rests exclusively with the Colorado Attorney General's office. There is no private right of action under this framework, and deployers and developers receive a 90-day cure period after notice of an alleged violation. However, this framework must still be enacted into law before the current legislative session ends. If the replacement bill fails, the original SB 24-205 takes effect June 30, 2026, with its full high-risk AI compliance apparatus intact. Monitor the legislative process closely.

Other State Laws to Watch

Illinois Biometric Information Privacy Act: Illinois is not the only state that regulates how private entities collect, use, and handle biometric identifiers and information (Texas and Washington do as well), but its law is the most stringent. The Illinois law includes a private right of action, which has led to several class action lawsuits. AI voice providers should be aware of these laws, especially when they use consumers' voices in a way that could later identify those consumers.

Federal Preemption? The Trump Administration has issued an Executive Order designed to pause state-level AI laws. However, this Executive Order is likely to be challenged (if not outright ignored).

Wiretapping Laws: Many states require all parties to consent to a call being recorded. AI voice calls are typically recorded, so the recording must be disclosed in the initial call disclosures. Builders should understand which states are “one-party consent” states and which are “two-party” (all-party) consent states, so that they obtain the proper consent.
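A conservative routing rule can decide when the recording disclosure must be played. The sketch below is a hypothetical design choice, not a statement of law. It applies the stricter standard if either end of the call is in an all-party state. It also fails safe when the callee's location is unknown, which is common with mobile numbers. The state list is a parameter that counsel should supply, and the two-state set in the example is an illustrative subset only.

```python
from typing import FrozenSet, Optional


def needs_all_party_consent(
    caller_state: str,
    callee_state: Optional[str],
    all_party_states: FrozenSet[str],
) -> bool:
    """Conservative check for whether a recorded call needs every party's consent.

    Applies the stricter rule if either end is in an all-party-consent state,
    and fails safe when the callee's location is unknown (for example, a mobile
    number whose area code does not reveal where the person actually is).
    """
    if callee_state is None:
        return True
    return bool({caller_state.upper(), callee_state.upper()} & all_party_states)


# Illustrative subset only; counsel should supply the complete, current list.
EXAMPLE_ALL_PARTY_STATES = frozenset({"CA", "FL"})
print(needs_all_party_consent("TX", "CA", EXAMPLE_ALL_PARTY_STATES))  # True
print(needs_all_party_consent("TX", None, EXAMPLE_ALL_PARTY_STATES))  # True
```

In practice, many builders simply play the recording disclosure on every call, which removes the need for this check entirely.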

AI Voice Compliance Implementation Checklist

Consent Infrastructure

Implement consent capture mechanism (web form, in-app, API)

Store consent records with timestamp, IP address, and exact disclosure language

Ensure consent is "clear and conspicuous"—not buried in terms of service

For marketing: Implement signed written consent (e-signature acceptable)
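The record-keeping items above can be captured in a single immutable record per consent event. The sketch below is a hypothetical schema. Its field names are assumptions, and its checks are shallow. In particular, it cannot judge whether a disclosure was "clear and conspicuous." The AI-mention check reflects the proposed FCC consent-disclosure rule, not a current requirement.

```python
import hashlib
import re
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import List, Optional

AI_MENTION = re.compile(r"\b(AI|artificial)\b", re.IGNORECASE)


@dataclass(frozen=True)
class ConsentRecord:
    phone_number: str
    consent_type: str       # "prior_express" or "prior_express_written"
    channel: str            # "web_form", "in_app", or "api"
    ip_address: str
    disclosure_text: str    # exact language shown to the consumer
    e_signature: Optional[str] = None
    captured_at: datetime = field(default_factory=lambda: datetime.now(timezone.utc))

    @property
    def disclosure_sha256(self) -> str:
        """Fingerprint of the exact disclosure wording, useful as later proof."""
        return hashlib.sha256(self.disclosure_text.encode("utf-8")).hexdigest()

    def problems(self) -> List[str]:
        """Shallow completeness checks; an empty list means nothing obvious is missing."""
        issues = []
        if not self.disclosure_text.strip():
            issues.append("missing disclosure language")
        if not self.ip_address:
            issues.append("missing IP address")
        if self.consent_type == "prior_express_written" and not self.e_signature:
            issues.append("marketing consent needs a signature (e-signature acceptable)")
        if not AI_MENTION.search(self.disclosure_text):
            issues.append("disclosure does not mention AI-generated calls (proposed FCC rule)")
        return issues
```

Storing a hash alongside the exact text makes it easy to show later that the language a consumer saw has not been altered.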

Disclosure Mechanisms

Build AI disclosure into call opening ("This call uses AI-generated voice technology")

Include caller identity and phone number in every call

Implement an automated opt-out mechanism within 2 seconds of the opening identification (telemarketing calls)

Opt-Out & DNC Management

Implement real-time opt-out processing (FCC rules allow no more than 10 business days to honor an opt-out; real-time processing keeps you well inside that limit)

Maintain internal DNC list with 5-year retention

Scrub against National DNC Registry every 31 days
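The three DNC items can be combined into a pre-dial gate. This is a hypothetical design for telemarketing calls. It does not model the rule's exceptions, and the class and method names are assumptions. Five years is represented conservatively as 5 × 366 days, so a number is never released early around leap years. The gate refuses to dial at all once the registry snapshot is more than 31 days old.

```python
from datetime import date, timedelta
from typing import Dict, Set

# Conservative stand-in for "five years": 5 x 366 days is never shorter than
# five calendar years, so a number is not released early around leap years.
INTERNAL_DNC_RETENTION = timedelta(days=5 * 366)
MAX_REGISTRY_AGE = timedelta(days=31)


class DncGate:
    """Pre-dial suppression check for telemarketing calls (hypothetical design)."""

    def __init__(self, registry_numbers: Set[str], registry_pulled: date) -> None:
        self.registry = registry_numbers
        self.registry_pulled = registry_pulled
        self.internal: Dict[str, date] = {}

    def record_opt_out(self, number: str, when: date) -> None:
        # Takes effect on the very next may_call() check.
        self.internal[number] = when

    def may_call(self, number: str, today: date) -> bool:
        if today - self.registry_pulled > MAX_REGISTRY_AGE:
            raise RuntimeError("National DNC snapshot is over 31 days old; re-scrub first")
        opted_out = self.internal.get(number)
        if opted_out is not None and today - opted_out < INTERNAL_DNC_RETENTION:
            return False
        return number not in self.registry
```

Raising an error on a stale snapshot, rather than silently allowing calls, turns a missed scrub into an operational failure instead of a compliance failure.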

Documentation & Audit Trail

Log all calls with consent verification status

Record call content (required for some industries)

Maintain consent records for minimum 5 years

Document compliance policies and training
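Per-call logging can be as simple as one JSON Lines record per call, appended to a write-once store. The field names below are hypothetical. The idea is to tie each call back to the consent record that justified it and to the disclosures actually played. Retention and tamper protection would be handled by the storage layer, not by this code.

```python
import json
from dataclasses import asdict, dataclass
from typing import Optional


@dataclass
class CallAuditEntry:
    call_id: str
    phone_number: str
    started_at: str                   # ISO 8601 timestamp, UTC
    consent_record_id: Optional[str]  # link back to the stored consent record
    consent_verified: bool
    ai_disclosure_played: bool
    opt_out_offered: bool
    recorded: bool


def to_audit_line(entry: CallAuditEntry) -> str:
    """Serialize one call as a JSON Lines record for an append-only log."""
    return json.dumps(asdict(entry), sort_keys=True) + "\n"


def append_audit(path: str, entry: CallAuditEntry) -> None:
    """Append one record; opening in "a" mode never rewrites earlier lines."""
    with open(path, "a", encoding="utf-8") as fh:
        fh.write(to_audit_line(entry))
```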

Schedule a 30-minute compliance assessment to review your AI voice product's consent mechanisms, disclosure language, and regulatory exposure.

About the Author:

John Henson founded Henson Legal, PLLC in May 2025 after a career guiding household-name brands through TCPA, state privacy laws, and FTC regulations—including serving as interim General Counsel at LendingTree. He focuses on helping lead sellers and lead buyers manage TCPA vicarious liability risks, and advising AI voice product builders on FCC artificial voice compliance. John's clients span insurance, financial services, and technology companies on the leading edge of customer acquisition.